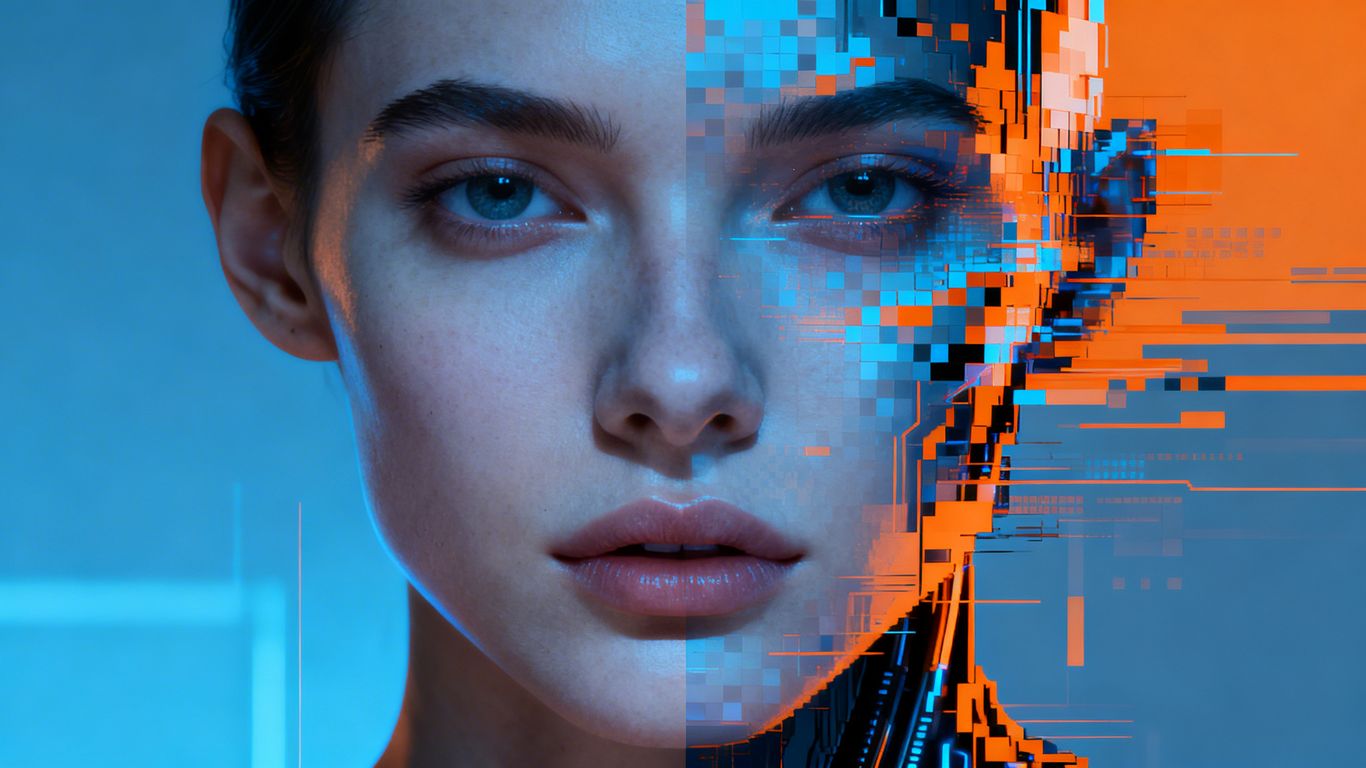

The proliferation of lifelike AI-generated content makes it significantly harder to tell truth from fiction online. As artificial intelligence tools become more sophisticated, distinguishing real media from synthetic media grows increasingly difficult, and people are relying more and more on AI detection tools. However, these tools are not infallible themselves, which turns the effort to maintain digital integrity into an ongoing battle.

Key Takeaways

- AI detection tools show promise but have significant weaknesses, struggling with complex or subtly altered content.

- The rapid advancement of AI generation means detection tools are in a constant 'arms race' to keep up.

- Low-quality or obviously flawed AI content is often easier to spot, but more sophisticated fakes are harder to detect.

- AI audio detection appears more robust, but detecting AI-generated images and video remains a significant challenge.

- Ultimately, critical thinking and source verification remain crucial for users navigating the digital landscape.

The Evolving Landscape of AI Detection

AI detection tools are increasingly used across many sectors, from financial institutions combating fraud to educators identifying plagiarism. These tools analyse content for hidden watermarks, compositional errors and other digital clues left by AI generators. However, a review of more than a thousand tests produced mixed results. Many tools could identify basic AI fakes, but their accuracy faltered with more complex or subtly manipulated content.

Strengths and Weaknesses of AI Detectors

Many AI detectors perform well on straightforward AI-generated images, often flagging unnatural lighting, overly perfect composition or distorted features such as hands. However, they frequently struggle with more nuanced fakes, such as those created by advanced models like Gemini or ChatGPT, and some tools even failed to recognise content they had generated themselves. Detection is also hampered by the rapid evolution of generation techniques: every time AI generators improve, detectors must update their own models to keep pace, producing an ongoing 'arms race'.

The Challenge of AI Video and Audio

AI-generated videos pose a significant threat, particularly since the release of tools such as OpenAI's Sora. Some detectors can identify AI videos, but their success rates vary. Analysing video and audio is crucial for security, given the potential for AI-powered voice impersonation and realistic fakes on video calls. AI audio detection appears to be more advanced, with tools such as Sensity and Resemble.ai performing strongly even on altered audio, whereas video detection remains a more significant hurdle.

The Importance of Critical Evaluation

Experts caution that AI detection tools should not be relied upon for definitive rulings. False positives, where real content is mistakenly flagged as AI-generated, can sow doubt and hinder the spread of accurate information. For instance, a real image of conflict casualties was dismissed by some as AI-generated, showing how doubt can spread even when the evidence points the other way.

AI can also be used to subtly alter real images, creating fakes that are difficult for both humans and detection tools to identify. Detectors generally perform better at confirming that authentic images and videos are real, but content that blends real and AI-generated elements presents a formidable challenge.

Moving Forward: Beyond Detection

As AI technology advances, the focus is shifting towards more robust verification methods. These include digital watermarks embedded at the point of capture and AI tools that automatically flag their own creations. However, the most sustainable solution lies in fostering digital literacy. Users are encouraged to look beyond surface-level analysis and to critically evaluate the source, context and provenance of information, treating digital media with the same scepticism they would apply to written text. The ultimate goal is a more discerning online audience, capable of navigating the complexities of AI-generated content.